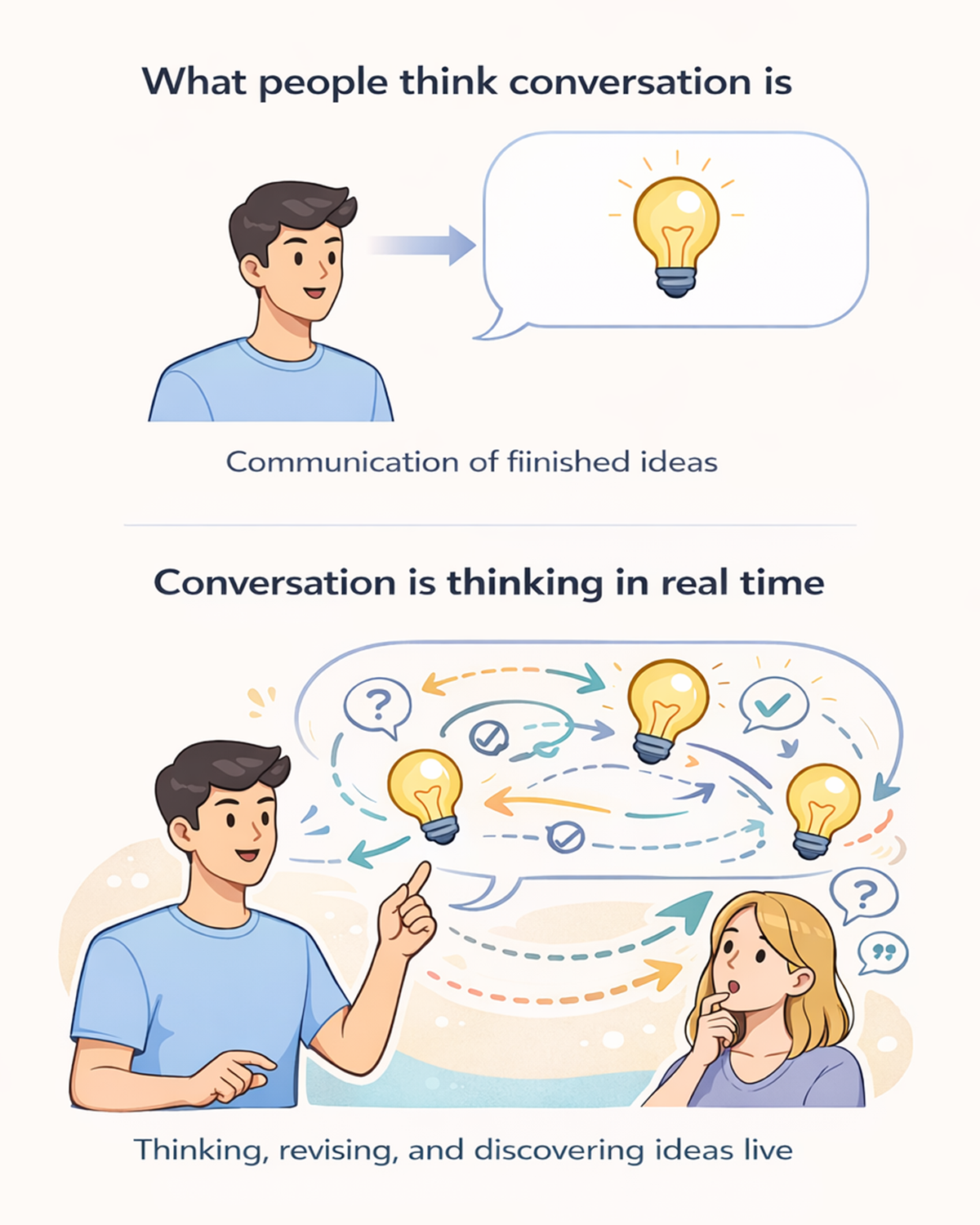

Conversation Is Thinking Output in Real Time

March 18, 2026

Humans do not simply communicate ideas through conversation. We discover them there.

Conversation is thinking output in real time.

When we speak, we are rarely delivering finished ideas. Instead, we are generating them live. Sentences often begin before thoughts are fully formed and may end somewhere different from where they started. We adjust mid-stream, correct ourselves, and refine what we mean as we hear our own words.

Speech is not simply communication.

It is thinking made public.

Understanding this requires looking more closely at how the brain actually produces thought.

Thinking Fast and Thinking Slow

Daniel Kahneman famously described two modes of cognition in his landmark book Thinking, Fast and Slow.

System 1 is fast, intuitive, and automatic.

System 2 is slower, deliberate, and analytical.

Most of our mental life emerges from the interaction between these two systems. Fast thinking proposes ideas quickly. Slow thinking monitors and evaluates them, checking for errors and refining conclusions.

Conversation is where these systems collide in real time.

When people speak, System 1 produces language rapidly—phrases, associations, reactions. Meanwhile, System 2 listens to what is being said, evaluating whether it is accurate, coherent, or incomplete.

This internal monitoring is why people often interrupt themselves while speaking:

"Actually, that's not quite right."

"Let me rephrase that."

"What I mean is…"

Speech becomes a live negotiation between fast and slow cognition. The mind drafts thoughts while speaking them.

The sentence is not merely the expression of thought.

The sentence is part of the thinking process itself.

The Brain Does Not Produce One Thought at a Time

One provocative account of cognition comes from Jeff Hawkins' Thousand Brains Theory, set out in his book A Thousand Brains: A New Theory of Intelligence.

Rather than a single centralized intelligence producing one coherent answer, Hawkins proposes that the neocortex may operate through many parallel models of the world. Thousands of cortical columns may simultaneously build predictions about objects and environments from different perspectives.

These models constantly compare their interpretations with one another.

In this view, the brain may generate many candidate interpretations at once, rather than producing a single unified thought.

When we speak, language allows one of these interpretations to temporarily surface into expression. But as other internal models update their predictions, the idea may shift.

This theory remains an active hypothesis rather than settled neuroscience. Still, it offers a compelling way to think about how the brain might generate multiple candidate interpretations in parallel. I personally find this model persuasive because it aligns well with how human thinking and conversation often behave in practice.

Conversation reflects parallel cognition resolving itself in real time.

Thought and Speech Are Not the Same System

Although thought and speech are closely connected, they are not identical processes.

Research in cognitive neuroscience suggests that reasoning, perception, prediction, and language production involve multiple interacting neural systems that must coordinate rapidly during conversation.

Thought can occur without language, and in conversation people often begin speaking before deliberation is fully complete.

This separation explains a familiar experience: sometimes we understand something intuitively but struggle to articulate it. Other times we begin explaining something and only discover what we actually think halfway through the explanation.

Language becomes a tool for externalizing cognition, not merely reporting it. Speaking is itself a form of information processing, and it feeds back into thinking. Seeing someone's reaction, hearing an interjection, or receiving confirming feedback all affect what we say and, ultimately, what we think.

Conversation can function like a kind of external working memory. Ideas move from internal models into words, where they can be examined, challenged, and refined.

When people talk together, they are not just exchanging information.

They are participating in a shared thinking process.

Not All Thinking-in-Speech Is the Same

Even if conversation is thinking output in real time, not every spoken thought is equally uncertain or exploratory.

Human speech exists on a spectrum.

At one end are exploratory thoughts—moments when we are genuinely figuring something out as we speak. Sentences emerge alongside reasoning. Words may shift direction mid-stream as we test and revise ideas.

At the other end are performative thoughts.

These are statements we have produced many times before: a familiar explanation, a rehearsed story, a well-practiced argument, even something as simple as a fast-food order. In these cases the thinking occurred earlier. What remains is the performance of a stable cognitive pattern.

A professor explaining a concept they have taught for years, a politician repeating a talking point, or a comedian delivering a practiced story may appear spontaneous, but the cognitive structure behind the speech is already formed.

Even famous speeches sometimes shift from performance to real-time thought.

During Martin Luther King Jr.'s 1963 March on Washington speech, the famous "I Have a Dream" section appears to have emerged after gospel singer Mahalia Jackson called out from behind him, "Tell them about the dream, Martin." King had spoken about the dream in earlier speeches, but in that moment he departed from his prepared remarks and moved into a more improvised and emotionally driven section.

The result became one of the most famous passages in American history, a powerful example of how live speech can move from prepared structure into spontaneous expression.

Conversation as a Cognitive Interface

Even rehearsed speech rarely remains static.

Conversation is interactive. Feedback from listeners—questions, interruptions, confusion, disagreement—can immediately shift a speaker from performance back into exploration.

A prepared explanation may suddenly become a real-time reasoning process.

This constant shift between recall, performance, and discovery is one reason conversations are so cognitively powerful.

Seen this way, conversation is not merely communication.

It is an interface between minds.

Thought emerges internally through fast and slow systems and through many parallel models in the brain. Speech allows those partial ideas to surface into the world, where they interact with other people's thoughts.

In conversation, thinking does not stop before speaking begins.

Speaking is part of thinking.

Ideas do not simply pass between people.

They emerge through the conversation itself.

Where Today's LLMs Fall Short

Live, self-revising thought is one place where today's basic large language model interactions still fall short.

Human conversation involves fast thinking, slow thinking, constant monitoring, and the ability to revise ideas mid-stream. We interrupt ourselves, repair mistakes, reconsider statements, and explain changes in our thinking while we are speaking.

In their basic form, most LLM interactions still produce responses more like completed outputs than live, self-revising conversational thought.

Human conversation, by contrast, is dynamic and self-correcting.

We think while speaking, speak while thinking, and constantly adjust both processes in response to new information and social feedback.

Large language models may be powerful building blocks for language generation, but reproducing human-like conversation requires more than generating fluent sentences. It requires systems that can monitor, revise, repair, and adapt ideas as they unfold in real time.
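To make that requirement a little more concrete, here is a minimal Python sketch of a speak-then-monitor loop. It is an illustration only, not a description of how any real system (including ours) works. Every name in it (`Utterance`, `speak_with_monitoring`, `toy_monitor`) is hypothetical, the "fast" generator is just a fixed list of sentences, and the "slow" monitor is a single hard-coded rule standing in for real evaluation.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, Optional


@dataclass
class Utterance:
    """Everything that was 'said', including mid-stream repairs."""
    segments: list[str] = field(default_factory=list)
    repairs: int = 0

    def text(self) -> str:
        return " ".join(self.segments)


def speak_with_monitoring(
    draft: Iterable[str],
    monitor: Callable[[str], Optional[str]],
) -> Utterance:
    """Emit draft segments one at a time and repair them in the open.

    `draft` stands in for fast generation (System 1 in the essay's terms).
    `monitor` stands in for slow evaluation (System 2): it returns a
    corrected segment, or None when the segment can stand as spoken.
    """
    out = Utterance()
    for segment in draft:
        out.segments.append(segment)      # speak first
        correction = monitor(segment)     # then listen to what was said
        if correction is not None:
            # The original segment stays in the transcript; the repair
            # is added after it rather than silently replacing it.
            out.segments.append(f"Actually, let me rephrase that: {correction}")
            out.repairs += 1
    return out


def toy_monitor(segment: str) -> Optional[str]:
    """Hypothetical checker: softens one overconfident word."""
    if "always" in segment:
        return segment.replace("always", "often")
    return None


result = speak_with_monitoring(
    ["Speech is thinking made public.", "People always revise mid-sentence."],
    toy_monitor,
)
print(result.text())
```

The design choice worth noticing is that the correction is appended rather than substituted. The transcript keeps both the first attempt and the repair, which is closer to how a person sounds when thinking aloud than a response that arrives already polished.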

That challenge motivates JoinIn.ai and shapes what we are building.